Is the “AI Bubble” Bursting, or Is This Just the Middle of the Story?

I’ve been noticing a shift lately in how people are talking about AI. More headlines. More hot takes. A growing sense of “see, we told you so.” As if the whole thing was always destined to collapse under its own weight.

I don’t see it that way.

The Early Rush of Innovation

When something genuinely new enters the market, especially something as powerful and open-ended as AI, excitement is part of the deal. People rush in with ideas. Some are thoughtful, some are half-baked, some are wildly ambitious. That’s usually how innovation shows up at the beginning: messy, noisy, and full of creative energy, with a lot of motion and a lot of experimentation. And that’s a good thing. It’s usually a sign that creative visionaries are paying attention and feeling inspired enough to test what’s possible.

And yes, many of those ideas won’t go anywhere. They were never meant to. That doesn’t mean the technology itself was a mistake. It just means we’re doing what humans have always done when a new tool becomes available: we probe, we poke, and we learn by overreaching a little. We test its limits, push the boundaries, and sometimes push a bit too far.

Patterns in the Cycle

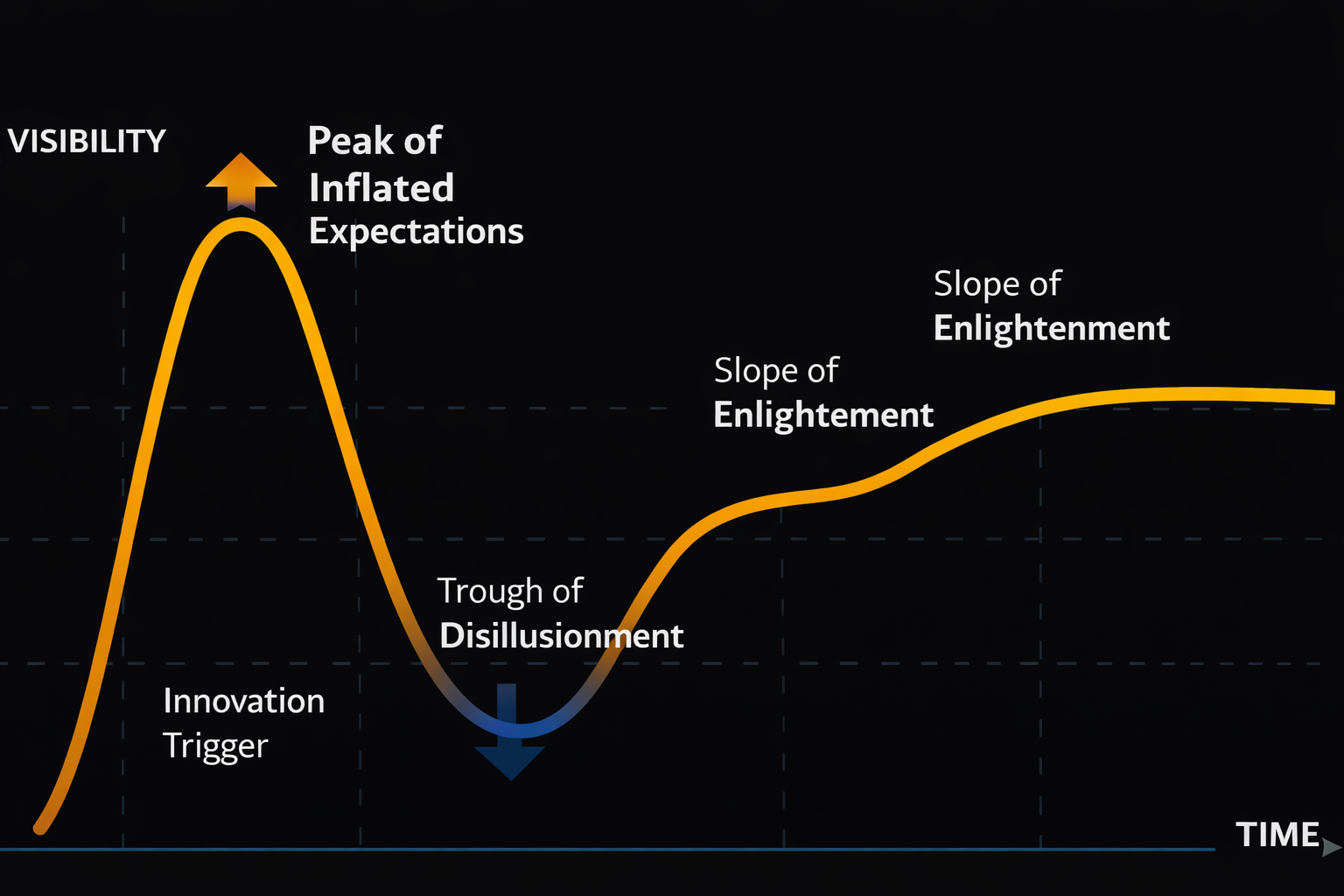

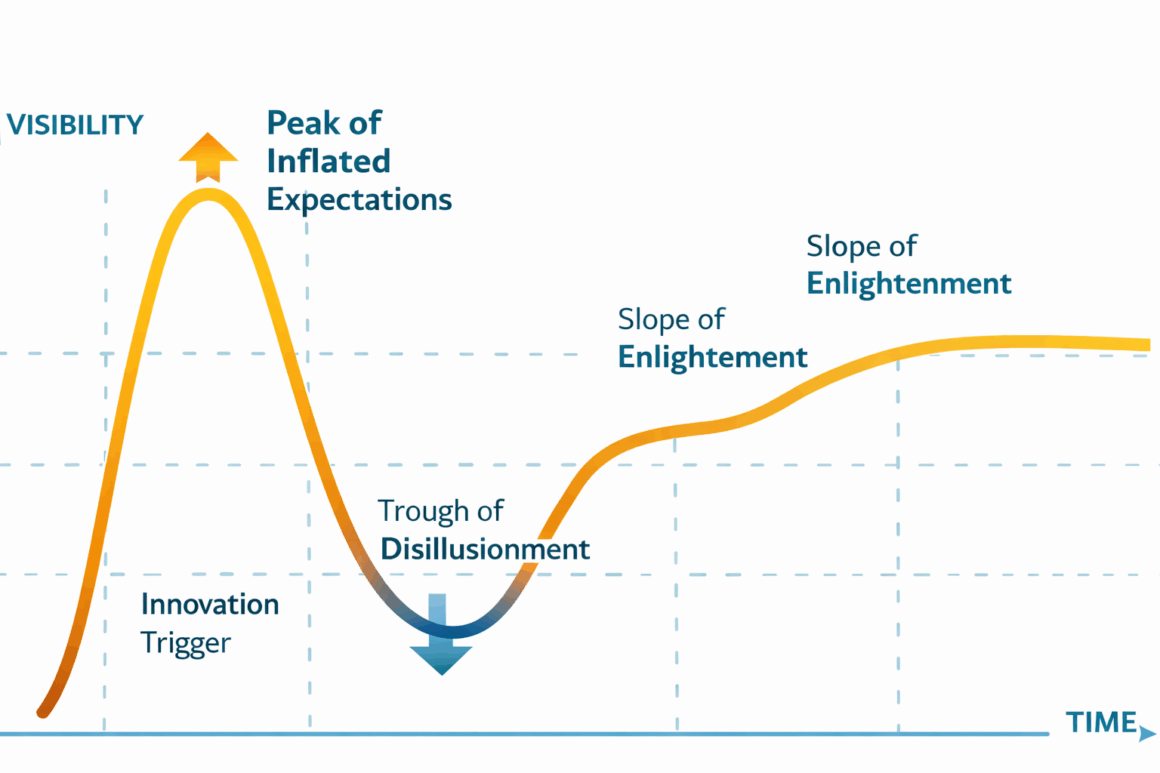

This pattern isn’t random, and it’s not unique to AI. It’s something researchers and business strategists have been tracking across technology cycles for decades. One widely used industry framework is the Gartner Hype Cycle. It shows how emerging technologies typically move through a peak of inflated expectations, followed by a trough of disillusionment, and then toward a more productive, stable maturity as real use cases emerge. That’s exactly the kind of “up, down, and then onward” rhythm we see with new tools like AI: the early excitement explodes, reality kicks in, and over time, the technology settles where it actually delivers value.

By a commonly cited estimate, only about one in ten startups survives long term. That trend is reported across industries and geographies, reflecting how new ideas and organizations undergo a sort of natural selection as markets sort out which models truly deliver value.

From Hype to Real Value

So when people talk about the “AI bubble bursting,” what I actually see is a transition point. We’re moving out of the phase where AI gets layered onto everything by default, and into a phase where we start asking better questions. Where does this actually help? Where does it add friction? Where is it useful, and where is it unnecessary? Does it deliver a return on investment where it’s applied?

That kind of discernment only comes with time.

The tools themselves are still evolving too. Developers are learning. Users are learning. The feedback loop is tightening. That’s progress: unspectacular, steady progress. It’s exactly what you’d expect if something is settling into its real role rather than trying to be everything all at once.

What’s also happening quietly, and often overlooked in the louder “bubble” conversations, is a learning curve around responsibility. As AI tools become more accessible, people building and using them are being forced to confront questions that go beyond performance and efficiency. Questions about data, about bias, about unintended consequences, about environmental cost. That kind of reckoning doesn’t usually happen at the beginning of an innovation cycle. It tends to emerge once the initial excitement fades and the real-world implications become harder to ignore.

I see this as another sign of maturation. Developers, organizations, and leaders are starting to make more conscious choices about how and where AI is used, and just as importantly, where it shouldn’t be. There’s a growing recognition that AI is not neutral, and that how it’s designed, trained, and deployed matters. Balancing technological capability with ethical judgment, human oversight, and environmental consideration isn’t a constraint on innovation. It’s what gives innovation staying power. Without that balance, the tool may move fast, but it won’t move wisely.

A Necessary Recalibration

What I don’t buy into is the idea that this moment somehow proves AI was a dead end from the start. If anything, it feels like a necessary correction. A pause. A recalibration.

AI, at its best, was never meant to replace human thinking. Or at least, I don’t see it that way. It’s a tool. A powerful one, yes, but still a tool. Without human critical thinking, judgment, context, and intention, it doesn’t really go very far. The value shows up when the two are paired thoughtfully and mindfully.

So no, I don’t see this as a collapse. I see it as a moment of clarity, a sorting-out process where both builders and users get more intentional about what actually matters and what was just noise.